Personal AI Supercomputer Based on NVIDIA DGX Spark Platform for Deep Learning


The NVIDIA DGX Spark is the most anticipated AI workstation of the year - an extremely compact yet powerful unit designed for local artificial intelligence processing. The device offers 128 GB of LPDDR5X memory, 20 ARM cores, an integrated Blackwell GPU, and two 200 Gb/s RDMA network ports. In practice, this means the DGX Spark combines server-class performance with the mobility of a laptop - you can literally pack it into a backpack.

The inspiration for the Spark project was the legendary DGX-1 series, known from data centers. This time, NVIDIA has brought its philosophy to the desktop format, offering everything needed to create, train, and test AI models - locally, without the need to use the cloud.

Despite being similar in size to a Mac Mini, the device significantly outperforms it. Inside we will find:

NVIDIA Grace Blackwell (GB10) processor - a combination of a 20-core ARM CPU (10x Cortex-X925 + 10x Cortex-A725) with a Blackwell GPU;

RTX 5070 class GPU, offering up to 1 petaFLOP of computing power for AI tasks (at FP4 precision with sparsity);

128 GB of integrated LPDDR5X memory, enabling the processing of large language and generative models;

4 TB NVMe drive;

RDMA connectivity via ConnectX-7 (2 × QSFP56, 200 Gb/s);

Wi-Fi 7 and Bluetooth 5.3;

Power delivery via USB-C PD (up to 240 W), with measured power consumption ranging from about 45 W at idle to 130 W under load.

The whole system runs on DGX OS, which is a modified version of Ubuntu with built-in NVIDIA drivers and software.

The Elmark Automatyka offer includes the MSI EdgeXpert – an AI unit built on NVIDIA DGX Spark technology.

This solution combines the computing power of the NVIDIA platform with the reliability and durability of MSI's design. EdgeXpert is the ideal choice for companies that want to:

develop and test local AI models,

process data at the network edge (Edge Computing),

deploy intelligent algorithms in factories, laboratories, R&D offices, or vision systems.

The device retains all the advantages of the original DGX Spark (massive power, energy efficiency, and clustering capabilities) while offering a solid design and full technical support from Elmark Automatyka.

MSI EdgeXpert


Despite its power, the DGX Spark surprises with its low noise level - even during intense load, it does not exceed 40 dBA. Power consumption in idle mode is only about 45 W, and during stress tests, the device maintained power draw within the range of 120–130 W, remaining exceptionally quiet.
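To put these figures in perspective, the short Python sketch below estimates daily energy use from the 45 W idle and 130 W load values quoted above. The function name, the 8-hour duty cycle, and the assumption of constant draw in each state are illustrative only, not measurements.

```python
def daily_energy_kwh(load_hours, idle_w=45.0, load_w=130.0):
    """Estimate energy used in 24 hours, in kWh.

    Assumes the unit draws a constant load_w while busy and a constant
    idle_w for the rest of the day (a simplification; real draw varies).
    """
    if not 0 <= load_hours <= 24:
        raise ValueError("load_hours must be between 0 and 24")
    idle_hours = 24 - load_hours
    watt_hours = load_hours * load_w + idle_hours * idle_w
    return watt_hours / 1000.0


if __name__ == "__main__":
    # Hypothetical duty cycle: 8 hours of heavy work per day.
    print(f"{daily_energy_kwh(8):.2f} kWh per day")
```

Under these assumptions, a unit that works 8 hours a day and idles the rest uses well under 2 kWh daily.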

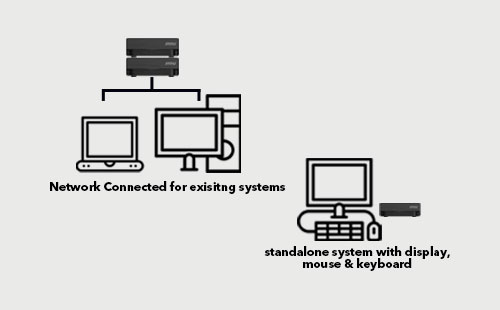

One of the biggest advantages of the DGX Spark is the ability to combine multiple units into a cluster without the need for additional switches. Thanks to the built-in NVIDIA ConnectX-7 card, two devices can be connected directly with a 200 Gb/s QSFP56 cable to create a shared computing space with a capacity of 256 GB of memory and two GB10 chips.

For larger configurations, 400 GbE switches can be used, allowing for the construction of clusters consisting of dozens of Sparks. This is a solution that until recently was reserved for HPC data centers, and now it fits on a desk.
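The cluster sizing described above comes down to simple arithmetic, sketched below in Python. The 128 GB per unit and 200 Gb/s link speed come from the specification; the 90% usable-memory fraction, the ideal link efficiency, and both function names are assumptions made purely for illustration. Real clusters also lose capacity and bandwidth to the operating system, buffers, and protocol overhead.

```python
import math

UNIT_MEMORY_GB = 128     # per-unit memory quoted in the specification
LINK_GBIT_PER_S = 200    # per-port link speed quoted in the specification


def units_needed(model_gb, usable_fraction=0.9):
    """Smallest number of units whose pooled usable memory holds model_gb.

    usable_fraction is an assumed share of memory left for the model.
    """
    usable_per_unit = UNIT_MEMORY_GB * usable_fraction
    return max(1, math.ceil(model_gb / usable_per_unit))


def transfer_seconds(payload_gb, efficiency=1.0):
    """Ideal time to move payload_gb (decimal gigabytes) over one link."""
    gbytes_per_s = LINK_GBIT_PER_S / 8 * efficiency  # bits to bytes
    return payload_gb / gbytes_per_s


if __name__ == "__main__":
    print(units_needed(200))      # a 200 GB model needs two units here
    print(transfer_seconds(50))   # 50 GB over one ideal 200 Gb/s link
```

With these assumptions, a model needing 200 GB fits on a directly connected pair, and a single 200 Gb/s link moves 25 GB per second at best.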

DGX Spark is not just hardware — it is a complete ecosystem. NVIDIA provides the build.nvidia.com platform, which contains ready-made "recipes" and guides for AI developers, including how to:

run LLMs (Large Language Models), such as Qwen 32B or GPT-OSS 120B,

generate images with diffusion models using ComfyUI,

configure environments like VS Code, Cursor, NVIDIA AI Workbench, or Open WebUI,

connect multiple DGX Spark units into a cluster.

Thanks to its large memory, the DGX Spark can run models that require as much as 60–65 GB of GPU memory and therefore do not fit on typical GeForce or Radeon cards. This is a huge step forward for developers who want to test local models without using cloud infrastructure.
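A rough rule of thumb shows where memory figures of this size come from: the weights alone take roughly the parameter count multiplied by the bytes stored per parameter. The Python sketch below applies that rule. The function names, the 20% overhead allowance for activations and cache, and the 24 GB comparison card are assumptions for illustration, not vendor data.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate memory for model weights, in decimal gigabytes."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, capacity_gb, overhead_fraction=0.2):
    """Check whether weights plus an assumed runtime overhead fit in memory."""
    needed = weight_memory_gb(params_billion, bits_per_weight)
    return needed * (1 + overhead_fraction) <= capacity_gb


if __name__ == "__main__":
    # A 32-billion-parameter model stored at 16 bits per weight.
    print(weight_memory_gb(32, 16))           # about 64 GB of weights
    print(fits(32, 16, capacity_gb=128))      # 128 GB unit
    print(fits(32, 16, capacity_gb=24))       # hypothetical 24 GB card
```

By this estimate, a 32-billion-parameter model at 16-bit precision needs about 64 GB for its weights, which fits comfortably in 128 GB but not on a single consumer graphics card.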

The DGX Spark is an ideal environment for training, testing, and prototyping models – fully compatible with the CUDA, PyTorch, and TensorFlow ecosystem.
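A quick way to confirm that the CUDA stack is visible from Python is to ask PyTorch which device it can use. The sketch below assumes PyTorch may or may not be installed and falls back to the CPU in either failure case; the helper name `pick_device` is hypothetical.

```python
import importlib.util


def pick_device():
    """Return "cuda" if PyTorch is installed and sees a GPU, else "cpu"."""
    if importlib.util.find_spec("torch") is None:
        return "cpu"  # PyTorch is not installed in this environment
    import torch
    return "cuda" if torch.cuda.is_available() else "cpu"


if __name__ == "__main__":
    print(f"Selected device: {pick_device()}")
```

The same check works unchanged on a laptop, a workstation, or a cloud instance, so scripts written this way need no edits when moved between machines.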

The DGX Spark can also become a tool for managers, allowing them to experiment with AI agents and process automation without having to invest in expensive servers. At a price of around $4,000, it is an accessible entry point into the world of local artificial intelligence.

Thanks to the compact chassis and USB-C power supply, the Spark can be taken on a trip and used as a mobile AI station – all you need is a laptop and a remote connection.

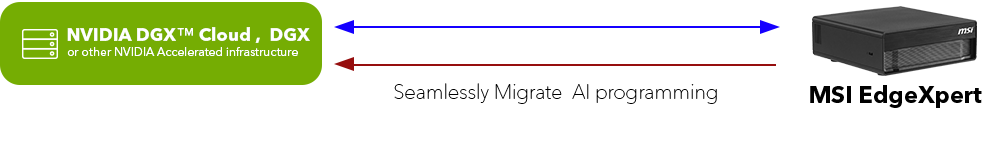

Leverage NVIDIA's AI software architecture to seamlessly scale solutions from a desktop computer, through NVIDIA DGX Cloud, to other data centers and cloud infrastructures using NVIDIA accelerators, all with minimal code changes.

NVIDIA DGX Spark marks a new era in the field of local AI systems. It combines computing power with a miniature form factor, offering users the ability to work with large AI models anywhere.

For both engineers and companies, it is a groundbreaking tool that can democratize access to advanced AI technologies and enable rapid prototyping and deployment of intelligent solutions without the need to invest in costly server infrastructure.