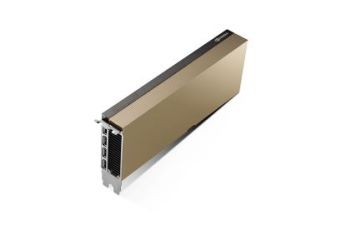

NVIDIA L40

GPU architecture: NVIDIA Ada Lovelace

Universal GPU accelerator for AI, graphics, simulation and virtualization

18176 NVIDIA CUDA cores for advanced parallel computing

568 Tensor cores for AI model training and inference

142 RT cores for real-time rendering and ray tracing

48 GB GPU memory with ECC for working with large AI models and 3D scenes

Memory bandwidth up to 864 GB/s

Interface: PCIe 4.0 x16

Passive cooling designed for servers and data centres

Maximum power consumption: 300 W

Product intended for professional use only

Description

NVIDIA L40 GPU accelerator for AI, graphics and digital twins

The NVIDIA L40 Tensor Core GPU is an accelerator designed for advanced computing workloads in data centres. Based on the NVIDIA Ada Lovelace architecture, the card combines high computational performance with graphics rendering and artificial intelligence acceleration.

By combining GPU processing power, advanced Tensor cores, and ray tracing technology, the NVIDIA L40 enables demanding tasks such as training AI models, rendering 3D graphics, simulations, and creating digital twins.

NVIDIA Ada Lovelace Architecture

The NVIDIA L40 GPU uses the NVIDIA Ada Lovelace architecture to deliver high performance for both AI computing tasks and compute-intensive graphics applications.

The accelerator features 18176 CUDA cores, 568 Tensor cores and 142 RT cores to accelerate graphics rendering, deep learning computing and simulation.

The card also offers 48 GB of GPU memory with ECC and memory bandwidth of up to 864 GB/s, which supports very large AI models, complex 3D scenes and demanding engineering computations.

- CUDA cores: 18176

- Tensor cores: 568

- RT cores: 142

- GPU memory: 48 GB with ECC
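As a rough illustration of what 48 GB and 864 GB/s mean in practice, the sketch below estimates how many model parameters would fit if the memory held weights only, and how long one full read of the memory would take at peak bandwidth. The function names are hypothetical, 1 GB is taken as 10^9 bytes, and the weights-only assumption is deliberately optimistic.

```python
# Back-of-the-envelope sizing using the figures quoted in this listing.
GPU_MEMORY_GB = 48            # GPU memory with ECC
MEMORY_BANDWIDTH_GBPS = 864   # peak memory bandwidth, GB/s

# Bytes per parameter for common numeric formats (weights only).
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "fp8": 1}


def max_params_billions(memory_gb: float, bytes_per_param: int) -> float:
    """Upper bound on parameters (in billions) if memory held weights only."""
    return memory_gb / bytes_per_param


def full_memory_read_ms(memory_gb: float, bandwidth_gbps: float) -> float:
    """Milliseconds to read the whole memory once at peak bandwidth."""
    return memory_gb / bandwidth_gbps * 1000


if __name__ == "__main__":
    for fmt, size in BYTES_PER_PARAM.items():
        limit = max_params_billions(GPU_MEMORY_GB, size)
        print(f"{fmt}: up to ~{limit:.0f}B parameters (weights only)")
    sweep = full_memory_read_ms(GPU_MEMORY_GB, MEMORY_BANDWIDTH_GBPS)
    print(f"one full memory read: ~{sweep:.1f} ms")
```

Under these assumptions, FP16 weights top out at about 24 billion parameters and one full pass over memory takes roughly 55.6 ms. Real workloads also need room for activations, caches and framework overhead, so usable model sizes are smaller.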

GPU for artificial intelligence and data analytics

The NVIDIA L40 is widely used in artificial intelligence and data analytics environments where high performance parallel computing is required.

Typical workloads include:

- LLMs and conversational systems

- models combining image, text and video

- AI using enterprise knowledge

- big data and enterprise AI

- image and video analytics

- academic computing

GPU for graphics, simulation and digital twins

With its RT cores and advanced rendering capabilities, the NVIDIA L40 is widely used in simulation systems and digital twin creation platforms.

Typical applications include:

- rendering 3D graphics

- virtualised manufacturing

- NVIDIA Omniverse platforms

- digital twins

- industrial simulations

- virtualised workstations

GPU accelerator for data centres

The NVIDIA L40 is designed for server systems and GPU clusters used in data centres. The PCIe 4.0 x16 interface provides up to 64 GB/s of bidirectional bandwidth between the GPU and the host system.

With passive cooling and a maximum power consumption of 300 W, the card is designed for installation in professional GPU platforms supporting demanding computing workloads.
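To put the host link in perspective, the sketch below works from the 64 GB/s bidirectional PCIe figure in the technical specification and estimates a best-case host-to-GPU copy time. It assumes the bidirectional figure splits evenly into two directions and ignores protocol overhead; the function names are hypothetical.

```python
# Figures quoted in this listing.
PCIE_BIDIRECTIONAL_GBPS = 64   # PCIe Gen4 x16, both directions combined
GPU_MEMORY_GB = 48
MEMORY_BANDWIDTH_GBPS = 864


def per_direction_gbps(bidirectional_gbps: float) -> float:
    """Assumption: the bidirectional figure splits evenly per direction."""
    return bidirectional_gbps / 2


def host_to_gpu_seconds(size_gb: float,
                        bidirectional_gbps: float = PCIE_BIDIRECTIONAL_GBPS) -> float:
    """Best-case time to copy size_gb from host to GPU (no overhead)."""
    return size_gb / per_direction_gbps(bidirectional_gbps)


def memory_to_link_ratio() -> float:
    """How much faster on-board memory is than one PCIe direction."""
    return MEMORY_BANDWIDTH_GBPS / per_direction_gbps(PCIE_BIDIRECTIONAL_GBPS)


if __name__ == "__main__":
    print(f"per direction: {per_direction_gbps(PCIE_BIDIRECTIONAL_GBPS):.0f} GB/s")
    print(f"filling {GPU_MEMORY_GB} GB over PCIe: ~{host_to_gpu_seconds(GPU_MEMORY_GB):.1f} s")
    print(f"memory vs link: ~{memory_to_link_ratio():.0f}x")
```

With the listed figures this gives 32 GB/s per direction, about 1.5 s to fill the full 48 GB, and on-board memory roughly 27 times faster than the link. This is why data is usually kept resident on the GPU rather than streamed from the host on every step.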

Technical Specification

| Specification | Value |
|---|---|
| GPU Architecture | NVIDIA Ada Lovelace architecture |
| GPU Memory | 48 GB GDDR6 with ECC |
| Memory Bandwidth | 864 GB/s |
| Interconnect Interface | PCIe Gen4 x16: 64 GB/s bi-directional |
| CUDA Cores (Ada Lovelace architecture) | 18,176 |
| NVIDIA Third-Generation RT Cores | 142 |
| NVIDIA Fourth-Generation Tensor Cores | 568 |
| RT Core Performance | 209 TFLOPS |
| FP32 | 90.5 TFLOPS |
| TF32 Tensor Core | 90.5 / 181** TFLOPS |
| BFLOAT16 Tensor Core | 181.05 / 362.1** TFLOPS |
| FP16 Tensor Core | 181.05 / 362.1** TFLOPS |
| FP8 Tensor Core | 362 / 724** TFLOPS |
| Peak INT8 Tensor | 362 / 724** TOPS |
| Peak INT4 Tensor | 724 / 1448** TOPS |
| Form Factor | 4.4” (H) x 10.5” (L), dual slot |
| Display Ports | 4 x DisplayPort 1.4a |
| Max Power Consumption | 300 W |
| Power Connector | 16-pin |
| Thermal Solution | Passive |
| Virtual GPU (vGPU) Software Support | Yes |
| vGPU Profiles Supported | See Virtual GPU Licensing Guide |
| NVENC / NVDEC | 3x / 3x (includes AV1 encode and decode) |
| Secure Boot with Root of Trust | Yes |
| NEBS Ready | Level 3 |
| MIG Support | No |
| NVLink Support | No |

Notes:

\* Preliminary specifications, subject to change.

\*\* With sparsity.
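The peak figures in the table can be sanity-checked with simple arithmetic. The sketch below derives the clock rate implied by the FP32 figure and confirms that each "with sparsity" value is double its dense counterpart. It assumes each CUDA core completes one fused multiply-add per clock, counted as 2 floating-point operations; the helper names are hypothetical and the derived clock is an inference, not a listed specification.

```python
# Values copied from the specification table.
CUDA_CORES = 18_176
FP32_TFLOPS = 90.5

# Assumption: one fused multiply-add per core per clock = 2 FLOPs.
FLOPS_PER_CORE_PER_CLOCK = 2

# (dense, with sparsity) peaks from the table, in TFLOPS or TOPS.
TENSOR_PEAKS = {
    "TF32": (90.5, 181),
    "BFLOAT16": (181.05, 362.1),
    "FP16": (181.05, 362.1),
    "FP8": (362, 724),
    "INT8": (362, 724),
    "INT4": (724, 1448),
}


def implied_clock_ghz(tflops: float, cores: int) -> float:
    """Clock rate (GHz) that would produce the given peak FP32 throughput."""
    flops_per_clock = cores * FLOPS_PER_CORE_PER_CLOCK
    return tflops * 1e12 / flops_per_clock / 1e9


def sparsity_speedups(peaks: dict) -> dict:
    """Ratio of the sparse peak to the dense peak for each format."""
    return {name: sparse / dense for name, (dense, sparse) in peaks.items()}


if __name__ == "__main__":
    print(f"implied clock: ~{implied_clock_ghz(FP32_TFLOPS, CUDA_CORES):.2f} GHz")
    for name, ratio in sparsity_speedups(TENSOR_PEAKS).items():
        print(f"{name}: sparse/dense = {ratio:.1f}x")
```

Under that assumption the FP32 figure corresponds to a clock of about 2.49 GHz, and every sparse figure is 2x the dense one. All of these are theoretical peaks; sustained throughput depends on the workload.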