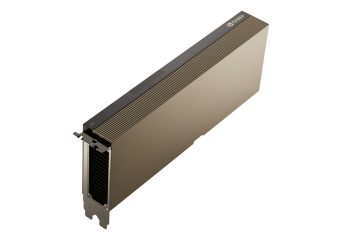

NVIDIA A30

GPU architecture NVIDIA Ampere

GPU accelerator optimised for AI, HPC and data centre computing

3584 NVIDIA CUDA cores for parallel computing

224 Tensor cores for accelerating AI models and deep learning

24GB of HBM2 memory with ECC for working with large data sets

Memory bandwidth up to 933 GB/s

Interface PCIe 4.0 x16 for high data throughput

Passive cooling designed for servers and computing systems

Maximum power consumption: 165 W

Free shipping from €300

Promocja cenowa na model HDR-15-5

Product intended for professional use only

NVIDIA A30

Description

NVIDIA A30 GPU accelerator for AI and data centre computing

NVIDIA A30 Tensor Core GPU is a high-performance accelerator designed to support demanding computing workloads in modern data centres. Based on the NVIDIA Ampere architecture, the chip delivers high performance for artificial intelligence, data analytics and high performance computing (HPC) tasks.

With its high memory bandwidth, power-efficient design and standard PCIe interface, the card can be easily deployed in existing server infrastructure. The NVIDIA A30 strikes the perfect balance between performance, scalability, and energy efficiency.

NVIDIA Ampere and Tensor Cores

The NVIDIA A30 accelerator features 3584 CUDA cores and 224 Tensor cores to accelerate matrix operations used in machine learning and deep learning.

The card also offers 24 GB of HBM2 memory with ECC and a bandwidth of up to 933 GB/s to process large data sets and advanced artificial intelligence models.

CUDA cores

Tensor cores

of HBM2 memory

memory bandwidth

Multi-Instance GPU (MIG) - flexible use of GPUs

The Multi-Instance GPU (MIG) technology allows a single accelerator to be split into several independent GPU instances. Each can handle separate compute workloads, providing resource isolation and predictable performance.

This enables the NVIDIA A30 to simultaneously support multiple AI applications or users in cloud and data centre environments.

GPU Applications in AI and Data Analytics

The NVIDIA A30 is widely used in artificial intelligence and data analytics environments where high parallel computing performance is critical.

LLM, chatbots, generative AI

VLM, image and text analytics

AI with enterprise knowledge base

big data and business analytics

scientific and engineering simulations

scalable GPU clusters

GPU for scalable server infrastructure

With passive cooling and PCIe 4.0 x16, the NVIDIA A30 is designed for high-density server and GPU clusters.

Maximum power consumption of 165 watts allows for efficient power usage while maintaining high computing performance.

Technical Specification

| FP64 | 5.2 teraFLOPS |

|---|---|

| FP64 Tensor Core | 10.3 teraFLOPS |

| FP32 | 10.3 teraFLOPS |

| TF32 Tensor Core | 82 teraFLOPS | 165 teraFLOPS* |

| BFLOAT16 Tensor Core | 165 teraFLOPS | 330 teraFLOPS* |

| FP16 Tensor Core | 165 teraFLOPS | 330 teraFLOPS* |

| INT8 Tensor Core | 330 TOPS | 661 TOPS* |

| INT4 Tensor Core | 661 TOPS | 1321 TOPS* |

| Media Engines | 1 Optical Flow Accelerator (OFA) 1 JPEG Decoder (NVJPEG) 4 Video Decoders (NVDEC) |

| GPU Memory | 24 GB HBM2 |

| GPU Memory Bandwidth | 933 GB/s |

| Interconnect | PCIe Gen4: 64 GB/s Third-gen NVLink: 200 GB/s** |

| Form Factor | Dual-slot, full-height, full-length (FHFL) |

| Max Thermal Design Power (TDP) | 165 W |

| Multi-Instance GPU (MIG) | 4 GPU instances @ 6 GB each 2 GPU instances @ 12 GB each 1 GPU instance @ 24 GB |

| Virtual GPU (vGPU) Software Support | NVIDIA AI Enterprise, NVIDIA Virtual Compute Server |

* With sparsity

** NVLink Bridge for up to two GPUs